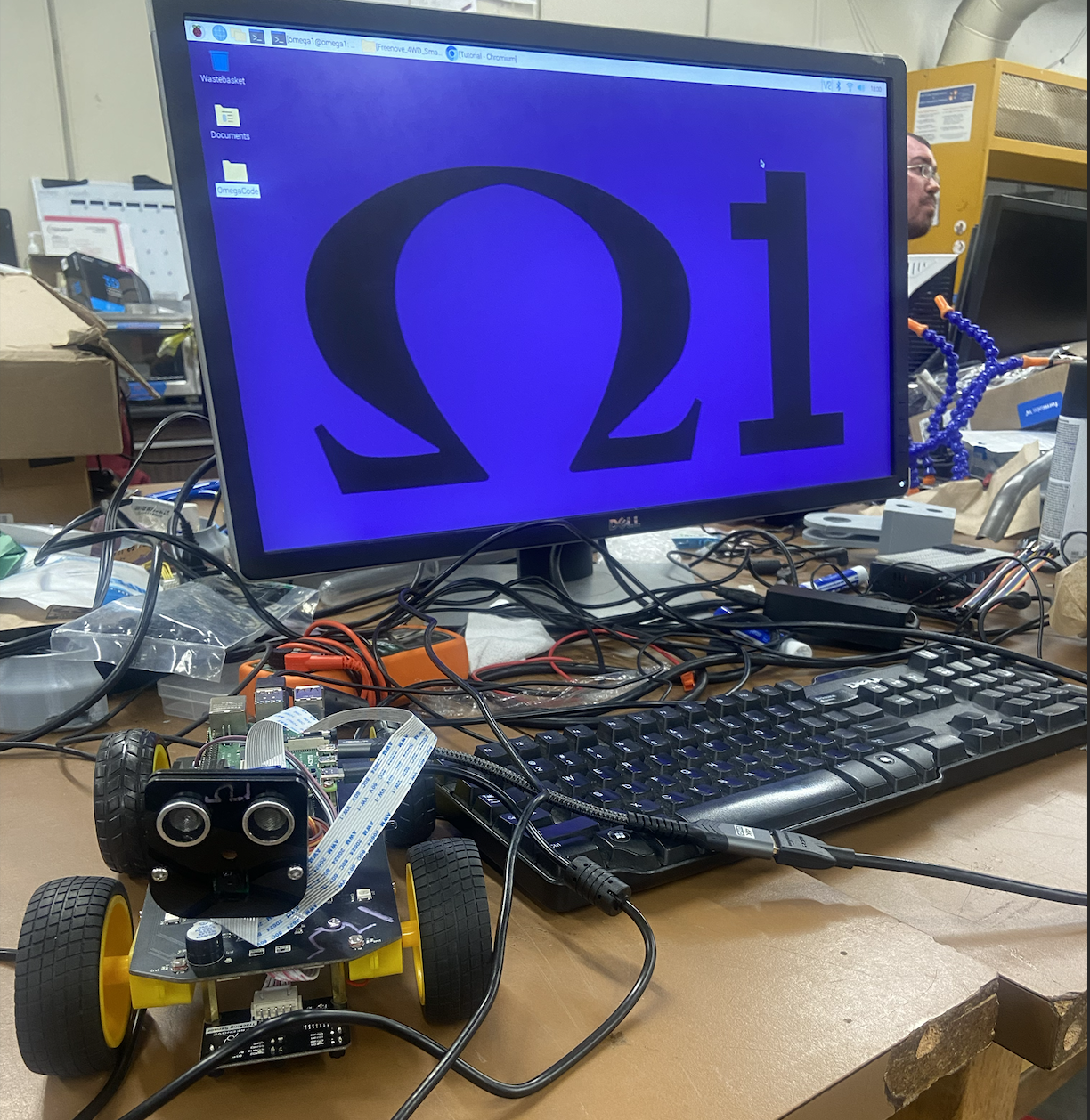

Omega-1 — Autonomous Robotics Platform

A fully operational autonomous robot running on a Raspberry Pi 4B. It drives, navigates, streams live video, and tracks its own position in real time. It is controlled from a custom web dashboard with Xbox controller support, live sensor feeds, and a mission control map.

Built as a distributed system across three machines: the Pi handles real-time motor control and sensor fusion, a web UI runs on any laptop with no robot software installed, and an NVIDIA Jetson Orin handles GPU-accelerated object detection — all communicating over a VPN-secured network.

- Drives autonomously with obstacle avoidance — ultrasonic sensor triggers deceleration, pivot, and heading recovery without stopping abruptly

- Estimates its own position using a Kalman filter that fuses dead-reckoning (motor commands) with visual landmark corrections (ArUco markers) — no GPS required

- Streams live camera video to the browser with under 100 ms latency via a custom MJPEG proxy

- Runs a full software simulation mode: the robot's physics and sensor geometry are modeled so the entire stack can be developed and tested without hardware

- Detects objects with YOLOv8 on the Jetson Orin and relays results back to the Pi in real time, using a compressed image stream (~500 KB/s vs. 9 MB/s raw, roughly 18x less bandwidth)

- Solved a hardware bug where the motor driver corrupted servo signals at power-on — traced to a shared PWM clock; fixed with register verification and hard pulse clamping on every write

- Eliminated ~240 unnecessary HTTP requests/minute from the frontend by introducing circuit breakers, shared polling contexts, and WebSocket health checks

- Stack: Python · Go · TypeScript · React · Next.js · FastAPI · ROS2 · OpenCV · WebSockets · Docker · TailwindCSS

- Status: Fully operational — live teleoperation, sensor streaming, localization, and multi-machine communication all running.
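
The position estimate described above (dead reckoning corrected by ArUco sightings) can be sketched as a small Kalman filter. This is a minimal illustration, not the project's actual code: it assumes the two axes are independent, that a marker sighting yields a direct (x, y) position measurement, and the `PositionFilter` name and all noise values are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class PositionFilter:
    """Per-axis Kalman filter over (x, y) with one shared variance."""

    x: float = 0.0
    y: float = 0.0
    var: float = 1.0            # position variance per axis (m^2), assumed
    process_var: float = 0.01   # uncertainty added per predict step, assumed
    marker_var: float = 0.04    # ArUco measurement variance, assumed

    def predict(self, speed: float, heading: float, dt: float) -> None:
        # Dead reckoning: integrate the commanded speed along the heading.
        self.x += speed * math.cos(heading) * dt
        self.y += speed * math.sin(heading) * dt
        # Uncertainty grows with every step that has no correction.
        self.var += self.process_var

    def correct(self, zx: float, zy: float) -> None:
        # Kalman gain: how much to trust the marker over the prediction.
        gain = self.var / (self.var + self.marker_var)
        self.x += gain * (zx - self.x)
        self.y += gain * (zy - self.y)
        # A measurement always shrinks the variance.
        self.var *= 1.0 - gain


pose = PositionFilter()
for _ in range(10):
    pose.predict(speed=0.5, heading=0.0, dt=0.1)  # drive forward for 1 s
pose.correct(zx=0.6, zy=0.0)                      # marker reports x = 0.6 m
```

After the correction the estimate sits between the dead-reckoned value and the marker reading, weighted toward whichever has the lower variance.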
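
The circuit breakers credited above with cutting frontend requests follow a common pattern: after repeated failures, stop calling the endpoint for a cooldown period instead of retrying on every poll. The sketch below shows the idea in Python for consistency with the other examples here; the dashboard itself would implement it in TypeScript, and the `CircuitBreaker` class, thresholds, and timings are assumptions.

```python
import time
from typing import Callable, Optional


class CircuitBreaker:
    """Blocks calls after repeated failures until a cooldown has elapsed."""

    def __init__(
        self,
        failure_threshold: int = 3,
        reset_after_s: float = 10.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def allow(self) -> bool:
        # Closed: requests flow normally.
        if self.opened_at is None:
            return True
        # Open: only allow a trial request once the cooldown has passed.
        return self.clock() - self.opened_at >= self.reset_after_s

    def record_success(self) -> None:
        # Any success closes the breaker and clears the failure count.
        self.failures = 0
        self.opened_at = None

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.failure_threshold:
            # Open (or re-open) the breaker and restart the cooldown.
            self.opened_at = self.clock()
```

A poller would check `allow()` before each request and call `record_success()` or `record_failure()` afterwards, so an unreachable robot costs one trial request per cooldown rather than one per poll.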